Modular Blockchains: A Deep Dive

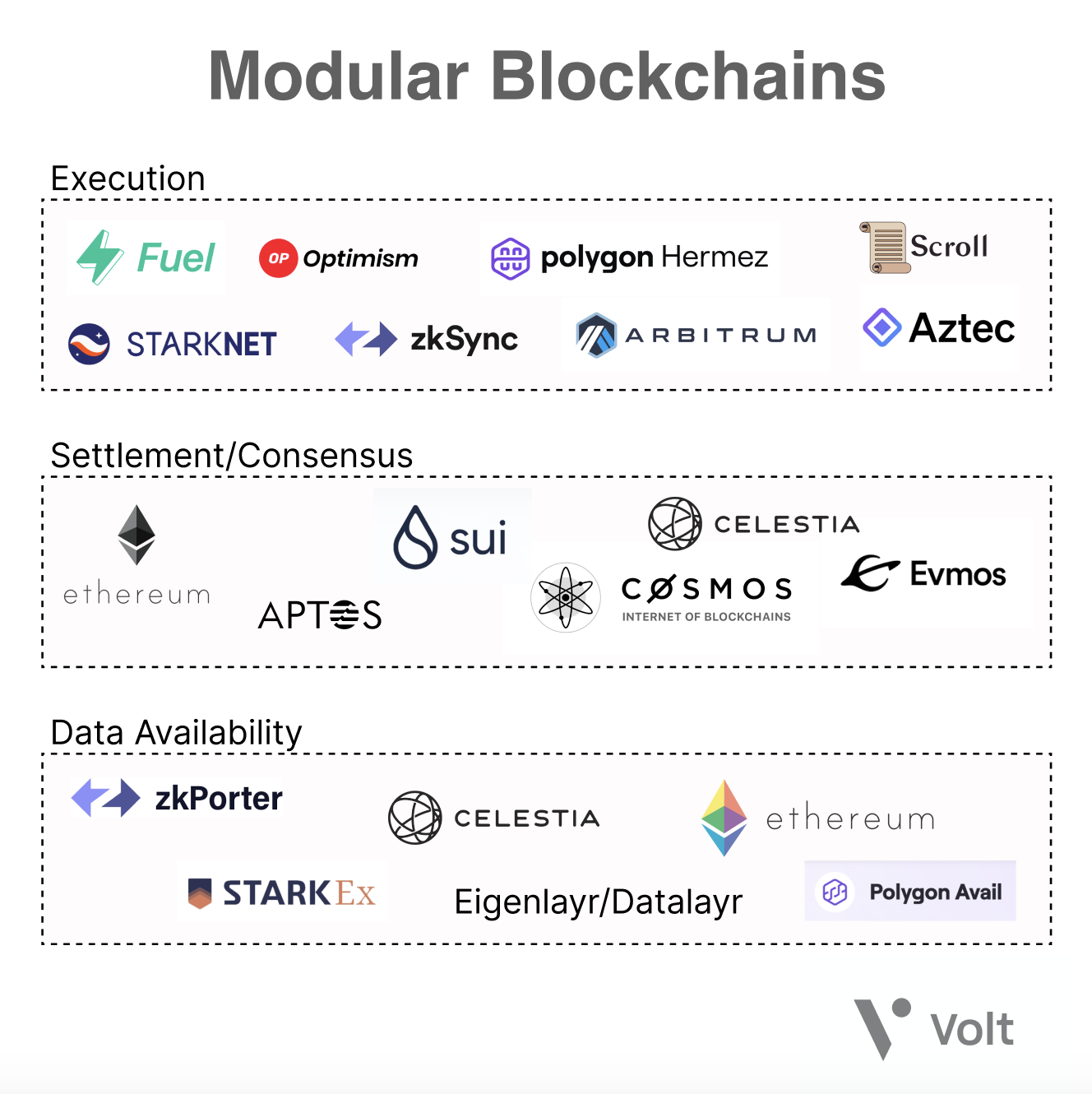

The idea of a “modular blockchain” is becoming a category-defining narrative around scalability and blockchain infrastructure.

The thesis is simple: by disaggregating the core components of a Layer 1 blockchain, we can make 100x improvements on individual layers, resulting in a more scalable, composable, and decentralized system. Before we can discuss modular blockchains at length, we must understand existing blockchain architecture and the limitations blockchains face with their current implementation.

What is a Blockchain?

Let’s briefly recap blockchain basics. Blocks in a blockchain consist of two components: the block header, and the transaction data associated with that header. Blocks are validated through “full nodes”, which parse and compute the entire block data to ensure transactions are valid and that users aren’t sending more ether than their account balance, for instance.

Let’s also briefly outline the “layers” of functionality that compose a blockchain.

- Execution

Transactions and state changes are initially processed here. Users also typically interact with the blockchain through this layer by signing transactions, deploying smart contracts, and transferring assets.

- Settlement

The settlement layer is where the execution of rollups is verified and disputes are resolved. This layer does not exist in monolithic chains and is an optional part of the modular stack. In an analogy to the U.S. court system, think of the Settlement layer as the U.S. Supreme Court, providing final arbitration on disputes.

- Consensus

The consensus layer of blockchains provides ordering and finality through a network of full nodes downloading and executing the contents of blocks, and reaching consensus on the validity of state transitions.

- Data Availability

The data required to verify that a state transition is valid should be published and stored on this layer. This should be easily verifiable in the event of attacks where malicious block producers withhold transaction data. The data availability layer is the main bottleneck for the blockchain scalability trilemma, we’ll explore why later on.

Ethereum, for example, is monolithic, meaning the base layer handles all components mentioned above.

Blockchains currently face a problem called the “Blockchain Scalability Trilemma”. Similar to Brewer’s theorem for distributed systems, blockchain architecture typically compromises on decentralization, security, or scalability in order to provide strong guarantees for the other two.

Security refers to the ability for the network to function under attack. This principle is a core tenet of blockchains and should never be compromised, so the real tradeoff is usually between scalability and decentralization.

Let's define decentralization in the context of blockchain systems: in order for a blockchain to be decentralized, hardware requirements must not be a limitation for participation, and the resource requirements of verifying the network should be low.

Scalability refers to a blockchain’s throughput divided by its cost to verify: the ability of a blockchain to handle an increasing amount of transactions while keeping resource requirements for verification low. There are two main ways to increase throughput. First, you can increase the block size, and therefore the capacity of transactions that can be included in a block. Unfortunately, larger block sizes result in centralization of the network as the hardware requirements of running full nodes increases in response to the need for higher computational output. Monolithic blockchains, in particular, run into this issue as an increase in throughput is correlated with an increase in the cost to verify the chain, resulting in less decentralization. Secondly, you can move execution off-chain, shifting the burden of computation away from nodes on the main network while utilizing proofs that allow the verification of computation on-chain.

With a modular architecture, it is possible for blockchains to begin to solve the blockchain scalability trilemma through the principle of separation of concerns. Through a modular execution and data availability layer, blockchains are able to scale throughput while at the same time maintaining properties that make the network trustless and decentralized by breaking the correlation between computation and verification cost. Let’s explore how this is possible by introducing fault proofs, rollups, and how they pertain to the Data Availability problem.

Fault Proofs and Optimistic Rollups

Vitalik states in Endgame that a likely compromise between centralization and decentralization is that the future of block production centralizes towards pools and specialized producers for scalability purposes, while block verification (keeping producers honest) should importantly remain decentralized. This can be achieved through splitting blockchain nodes into full nodes and light clients. There are two problems in which this model is relevant: block validation (verifying that the computation is correct) and block availability (verifying all data has been published). Let’s explore its application in block validation first.

Full nodes download, compute, and verify every transaction in the block, while light clients only download block headers and assume the transactions are valid. Light clients then rely on fault proofs generated by full nodes for transaction validation. This in turn allows light clients to autonomously identify invalid transactions, enabling them to operate under nearly the same security guarantees as a full node. By default, light clients assume state transitions are valid, and can dispute the validity of states through receiving fault proofs. When a node’s state is challenged by a fault proof, consensus is achieved through a full node re-executing the relevant transactions, resulting in the stake of the dishonest node being slashed.

Light clients and the fault proof model are secure under the honest minority assumption, where there exists at least one honest full-node with the full state of the chain submitting fault proofs. This model is particularly relevant for sharded blockchains (such as Ethereum’s architecture post-merge) as validators can opt to run a full node on one shard and light clients on the rest while maintaining 1 of N security guarantees on all shards.

Optimistic rollups utilize this model to securely abstract the blockchain execution layer to sequencers, powerful computers that bundle and execute multiple transactions and periodically post compressed data back to the parent chain. Shifting this computation off-chain (with respect to the parent chain) allows for 10-100x increases in transaction throughput. How can we trust these off-chain sequencers to remain benign? We introduce bonds, tokens that operators must stake to run a sequencer. Since sequencers post transaction data back to the parent chain, we can then use verifiers, nodes that watch for state mismatches between a parent chain and its rollup, to issue fault proofs and subsequently slash the stake of malicious sequencers. Since optimistic rollups utilize fault proofs, they are secure under the assumption that one honest verifier exists in the network. This use of fault proofs is where optimistic rollups derive their name from - state transitions are assumed to be valid until proven otherwise during a dispute period, handled in the settlement layer.

This is how we can scale throughput while minimizing trust: allow computation to become centralized while keeping validation of that computation decentralized.

The Data Availability Problem

While fault proofs are a useful tool to solve decentralized block validation, full nodes are reliant on block availability to generate fault proofs. A malicious block producer may choose to only publish block headers and withhold part or the entirety of the corresponding data, preventing full nodes from verifying and identifying invalid transactions, and thus generating fault proofs. This type of attack is trivial for full nodes to recognize as they can simply download the entire block, and fork away from the invalid chain when they notice inconsistencies or withheld data. However, light clients will continue to track headers of a potentially invalid chain, forking away from full nodes. (Remember that light clients do not download the entire block, and assume state transitions are valid by default.)

This is the essence of the data availability problem as it pertains to fault proofs: light clients must ensure that all transactional data is released in a block before validation, such that full nodes and light clients must automatically agree on the same header for the canonical chain. (If you’re wondering why we can’t use a similar system to fault proofs for data availability, you can read about the data withholding dilemma in depth here. Essentially, game theory dictates that a fault proof-based system utilized here would be exploited and result in a lose-lose situation for honest actors.)

Solutions

It may seem like we’re back to square one. How can light clients ensure all transactional data in a block is released without having to download the entire block - centralizing hardware requirements and thus defeating the purpose of light clients?

One way this can be achieved is through a mathematical primitive called erasure coding. By duplicating the bytes in a block, erasure coding enables the reconstruction of the entire block even if a percentage of data goes missing. This technology is utilized to perform data availability sampling, allowing light clients to probabilistically determine the entirety of a block has been published by randomly sampling small portions of the block. This allows light clients to ensure all transaction data is included in a particular block before accepting it as valid and following the corresponding block header. However, there are a few caveats to this technique: data availability sampling has high latency, and similar to the honest minority assumption, the security guarantee relies on the assumption that there are sufficient light clients performing sampling to be able to probabilistically determine the availability of a block.

Validity Proofs and Zero Knowledge Rollups

Another solution for decentralized block validation is to do away with needing transaction data for state transitions. Validity proofs, in contrast, assume a more pessimistic view compared to fault proofs. By removing the dispute process, validity proofs can guarantee the atomicity of all state transitions with the trade-off of requiring a proof for each and every state transition. This is accomplished through utilizing novel zero knowledge technologies SNARKs and STARKs. Compared to fault proofs, validity proofs require more computational intensity in exchange for stronger state guarantees, impacting scalability.

Zero Knowledge Rollups are rollups which utilize validity proofs rather than fault proofs for state verification. They follow a similar computation and verification model as optimistic rollups (albeit with validity proofs as the schema instead of fault proofs) through a sequencer/prover model, where sequencers handle computation and provers generate the corresponding proofs. Starknet, for example, launched with centralized sequencers for bootstrapping purposes, and has progressive decentralization of open sequencers and provers on the roadmap. Computations themselves are unbounded on ZK rollups due to off-chain execution on sequencers. However, since proofs for those computations must be validated on-chain, finality is still bottlenecked by proof generation.

It’s important to note that the technique of utilizing light clients for state validation only pertains to fault proof architecture. Since state transitions are guaranteed to be valid with validity proofs, transaction data is no longer required for nodes to validate blocks. The data availability problem for validity proofs still exists, however, and is slightly more subtle: despite the guaranteed state, transaction data for validity proofs are still necessary such that nodes are able to update and serve the state transitions to end users. Therefore, rollups utilizing validity proofs are still bound by the data availability problem.

Where We Are Now

Recall Vitalik’s thesis: all roads lead towards centralized block production and decentralized block validation. While we can exponentially increase rollup throughput via advances in block producer hardware, the true scalability bottleneck is block availability rather than block validation. This leads to an important insight: No matter how powerful we make the execution layer or what proof implementation we use, our throughput is ultimately limited by data availability.

One way we currently ensure data availability is by posting blockchain data on-chain. Rollup implementations utilize Ethereum mainnet as a data availability layer, periodically publishing all rollup blocks on Ethereum. The primary issue faced by this stop-gap solution is Ethereum’s current architecture relies on full nodes guaranteeing data availability by downloading the entirety of the block, rather than light clients performing data availability sampling. As we increase block sizes for additional throughput, this inevitably results in increased hardware requirements for the full nodes verifying data availability, centralizing the network.

In the future, Ethereum has plans on moving towards a sharded architecture utilizing data availability sampling, composed of both full nodes and light clients securing the network. (Note - Ethereum sharding technically uses KZG commitments instead of fault proofs, but the data availability problem is relevant regardless.) However, this only solves part of the problem: another fundamental issue faced by rollup architecture is that rollup blocks are dumped to Ethereum mainnet as calldata. This introduces issues down the line as calldata is expensive at scale, bottlenecking L2 users at a cost of 16 gas per byte regardless of rollup transaction batch size.

Validiums are another way to increase scalability and throughput yet maintain data availability guarantees: granular transaction data can be sent off-chain (with respect to the origin) to a data availability committee, PoS guardians, or data availability layer. By shifting data availability from Ethereum calldata to off-chain solutions, validiums bypass the fixed byte gas costs associated with increased rollup usage.

Rollup architecture has also led to the unique insight that blockchains do not need to provide execution or computation itself, but rather simply the function of ordering blocks and guaranteeing the data availability of those blocks. This is the principle design philosophy behind Celestia, the first modular blockchain network. Formerly known as LazyLedger, Celestia began as a “lazy blockchain”, leaving execution and validation to other modular layers, and focuses solely on providing a data availability layer for transaction ordering and data availability guarantees through data availability sampling. Centralized block production and decentralized block verifiers is a core premise behind Celestia’s design: even mobile phones are able to participate as a light client and secure the network. Due to properties of data availability sampling, rollups plugging into Celestia as a data availability layer are able to support higher block sizes (and therefore throughput) as the number of Celestia light nodes grows, while maintaining the same probabilistic guarantees.

Other solutions today include StarkEx, zkPorter, and Polygon Avail, with StarkEx being the only validium currently used in production. Regardless, most validiums include an implicit assumption of trust in the data availability source, whether that’s managed through a trusted committee, guardians, or generalized data availability layer. This trust also suggests that malicious operators can prevent user funds from being withdrawn.

Work in Progress

Modular blockchain architecture is a hotly contested topic in the current crypto landscape. There has been significant pushback on the Celestium vision for a modular blockchain architecture, due to the security concerns and additional trust assumptions associated with a fragmented settlement and data availability layer.

Meanwhile, there have been significant advances being made on all fronts of the blockchain stack: Fuel Labs is working on a parallelized VM on the execution layer, and the team at Optimism is working on sharding, incentivized verification, and a decentralized sequencer. Hybrid optimistic and zero knowledge solutions are also in development.

Ethereum’s development roadmap post-merge includes plans for a unified settlement and data availability layer. Danksharding, specifically, is a promising development in the Ethereum roadmap designed to transform and optimize Ethereum L1 data shards and blockspace into a “data availability engine”, thus allowing L2 rollups to implement low-cost, high-throughput transactions.

Celestia’s un-opinionated architecture also allows a wide range of execution layer implementations to utilize it as a data availability layer, laying the foundational infrastructure for alternative, non-EVM virtual machines like WASM, Starknet, and FuelVM. This shared data availability for a variety of execution solutions allow developers to create trust minimized bridges between Celestia clusters, unlocking cross-chain and inter-ecosystem composability and interoperability similar to what is possible between Ethereum and its rollups.

Volitions, pioneered by Starkware, introduces an innovative solution to the on-chain vs off-chain data availability dilemma: users and developers can choose between using validiums to send transaction data off-chain, or keeping transaction data on-chain, each with their unique benefits and drawbacks.

Additionally, the increased usage and prevalence of layer 2 solutions unlocks layer 3: fractal scaling. Fractal scaling allows application-specific rollups to be deployed on layer 2s - developers can now deploy their applications with full control of their infrastructure, from data availability to privacy. Deploying on a layer 3 also unlocks interoperability between all layer 3 applications on layer 2, instead of the expensive base chain as is the case with application-specific sovereign chains (e.g. Cosmos). Rollups on rollups!

Similar to how web infrastructure evolved from on-premise servers to cloud servers, the decentralized web is evolving from monolithic blockchains and siloed consensus layers to modular, application specific chains with shared consensus layers. Regardless of whichever solution and implementation ends up catching on, one thing is clear: in a modular future, users are the ultimate winners.

What are your thoughts on blockchain architecture? Did I get anything wrong? Feel free to reach out with feedback or questions on Twitter @0xAlec or through email alec@volt.capital.

Many thanks to Nick White, John Adler, Alex Beckett, John Wang, and Osprey for reviewing and providing feedback.

Additional thanks to Paradigm Research, Delphi Research, Polynya, and the team at Celestia for publishing in-depth resources on modular blockchains. You can read more through the links provided below.

https://www.paradigm.xyz/2021/01/almost-everything-you-need-to-know-about-optimistic-rollup

https://blog.celestia.org/ethereum-off-chain-data-availability-landscape/

https://members.delphidigital.io/reports/pay-attention-to-celestia

https://polynya.medium.com/updated-thoughts-on-modular-blockchains-ce1b159fa1b3

https://vitalik.ca/general/2020/08/20/trust.html

https://vitalik.ca/general/2021/12/06/endgame.html